A radiology department at 3am is quieter than its daytime self. The reporting rooms empty out. The radiologists go home.

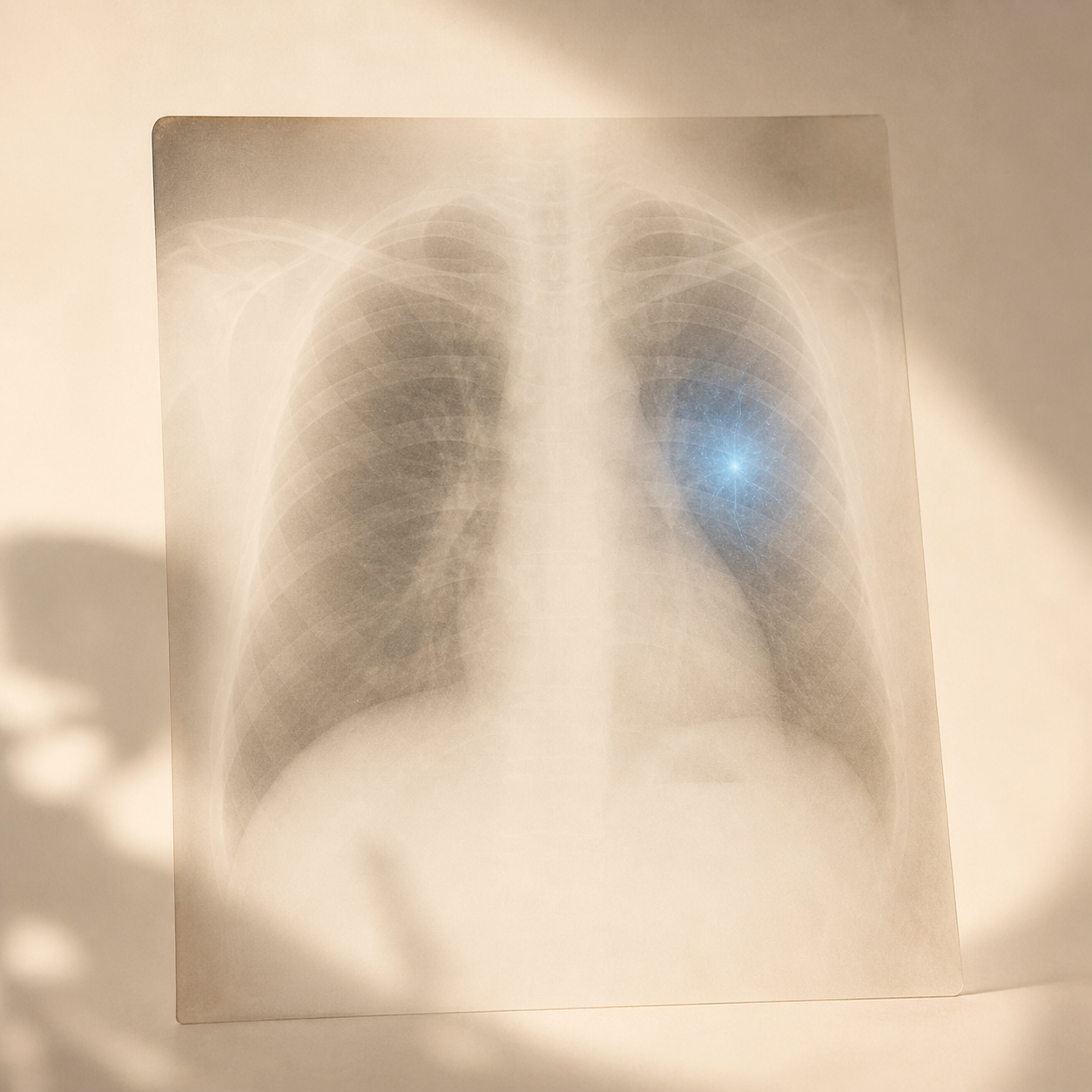

But the scanners don't stop. Emergency CT scans, overnight chest X-rays, suspected stroke MRIs — they keep producing images. Images that need a qualified human to interpret them before any decision can be made.

In hospitals that have deployed radiology AI, the gap between a scan being taken and someone looking at it is shrinking fast. The algorithm reads the scan the moment it's taken. If it spots something serious (a collapsed lung, a brain bleed, a suspicious shadow), it flags the image straight to the top of the queue. A clinician is alerted within minutes. What used to wait until morning gets reviewed now.

This isn't theoretical. It's operational across hundreds of hospitals worldwide. It's worth understanding what it actually does.

What "AI in radiology" actually means

The term covers three different things:

Triage. The most common and least controversial use. The algorithm analyses every image as it comes in and pushes the urgent ones to the front of the queue. A radiologist still reads them all; the AI just decides which scans get read first. The impact on time-to-diagnosis for things like brain bleeds is real: 15 to 30 minutes saved on average.

Detection. The algorithm spots specific findings — a lung nodule, diabetic damage to the retina, a fracture. Studies show its accuracy on chest X-rays is broadly comparable to a trained radiologist, with caveats about which populations the AI was trained on (more on that below).

Report generation. The newest and most contested use. AI drafts a preliminary report: findings, measurements, possible explanations. A human radiologist still reviews it and signs it off. By speeding up routine scans with normal results, it frees capacity for complex cases.
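
For readers who like to see the mechanics, the triage idea can be sketched as a priority queue. This is a minimal illustration, not how any particular product works: the `Worklist` class, the two urgency levels, and the study IDs are all made up for the example. The AI's only job here is to set a flag; every study still ends up in front of a human reader.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count

# Hypothetical urgency levels: a lower number is read sooner.
URGENT, ROUTINE = 0, 1


@dataclass(order=True)
class Study:
    priority: int                          # URGENT or ROUTINE
    arrival: int                           # tie-breaker: earlier arrivals first
    study_id: str = field(compare=False)   # not used for ordering


class Worklist:
    """Reading queue where AI-flagged studies jump ahead of routine ones."""

    def __init__(self):
        self._heap = []
        self._arrivals = count()

    def add(self, study_id, ai_flagged_urgent):
        priority = URGENT if ai_flagged_urgent else ROUTINE
        heapq.heappush(self._heap, Study(priority, next(self._arrivals), study_id))

    def next_study(self):
        # The radiologist always pulls the most urgent, oldest study.
        return heapq.heappop(self._heap).study_id

    def __len__(self):
        return len(self._heap)


worklist = Worklist()
worklist.add("chest-xray-001", ai_flagged_urgent=False)
worklist.add("head-ct-002", ai_flagged_urgent=True)
worklist.add("chest-xray-003", ai_flagged_urgent=False)

reading_order = [worklist.next_study() for _ in range(len(worklist))]
print(reading_order)
```

The flagged head CT comes out first even though it arrived second, and the two routine X-rays keep their original order. Nothing is dropped from the queue; only the order changes.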

What the evidence shows, and where it falls short

The honest picture:

- AI triage works. Documented faster reporting of urgent findings across multiple hospital systems.

- AI detection of lung nodules on CT is at least as good as a radiologist, sometimes better for very small nodules.

- AI works best on data that looks like its training set. Most tools were trained on North American and European populations and perform less well on South Asian, East Asian, or African patients. This is a real, documented bias — not a theoretical concern.

- False positives aren't free. An AI flagging a "nodule" that isn't really there triggers follow-up imaging, conversations about cancer risk, and a lot of anxiety. The cost of being wrong is not zero.
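
A bit of arithmetic shows why false positives matter so much. The sketch below uses invented numbers (a tool that is 90% sensitive and 90% specific, applied where 1 patient in 100 really has the finding). They are not taken from any study or product; they are only there to show how the calculation behaves when the condition is rare.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Share of positive flags that are real, given the three rates (0 to 1)."""
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


# Illustrative, made-up inputs: not measurements of any real tool.
ppv = positive_predictive_value(sensitivity=0.90, specificity=0.90, prevalence=0.01)
print(f"{ppv:.1%} of flags are real findings")
```

With these made-up inputs, only about 8% of flags point to a real finding, so roughly 11 in every 12 flagged patients would be sent for follow-up they didn't need. Real tools and real populations will give different numbers, but the pattern holds: the rarer the condition, the more a tool's false positives outnumber its true ones.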

Who's responsible if the AI misses something?

The clear answer, across virtually every health system: the human is.

AI tools in clinical use are decision support, not autonomous diagnosticians. The radiologist who signs the report is clinically and legally responsible for what it says — whether they wrote it from scratch or edited an AI draft.

It's the same logic as an ECG machine that prints an automated reading. If that reading is wrong and the doctor acts on it without checking the trace, the doctor is responsible. The machine is a tool.

What this means for you

Two reasonable questions you can ask:

- "Was AI used in interpreting my scan?" Most hospitals can give you a straightforward answer. You're entitled to know.

- "Was the result confirmed by a human radiologist?" The honest answer should always be yes for any clinical decision. AI flags. Humans confirm.

The structural problem is real — there are too many scans and not enough radiologists, almost everywhere. AI doesn't solve that. It doesn't replace training more radiologists. But used well, it's already reducing time-to-diagnosis for serious conditions. That's worth understanding, and worth asking about.

Curiosity first. — Dr. Brugal